In many fields like medicine and financial services, the owners of data are bound by regulatory restrictions around data privacy. That can be a real obstacle to bringing larger data sets together, which in turn limits how much we can learn from that data. To tackle these issues, Intel Labs has been making advances in Confidential Computing and Federated Learning.

Speaking at Intel Labs Day on December 3, 2020, Jason Martin, Principal Engineer, Secure Intelligence at Intel Labs, explained Intel’s Confidential Computing initiative.

“Today encryption is being used to protect data while it is being sent across the network and while it is stored. But data can still be vulnerable when it is being used. Confidential Computing allows data to be protected while in use,” said Martin.

Intel Labs’ approach to Confidential Computing rests on three tenets:

- Data confidentiality – to protect secrets from exposure.

- Execution integrity – to protect the computation from being changed.

- Attestation – to verify that the hardware and software are genuine.

Trusted execution environments provide a mechanism to perform confidential computing. They’re designed to minimize the set of hardware and software you need to trust to keep your data secure.

“To reduce the software that you must rely on, you need to ensure that other applications, or even the operating system, can’t compromise your data, even if malware is present. Think of it as a safe that protects your valuables even from an intruder in the building,” said Martin.

In the early 2000s, Intel Labs began researching ways to isolate applications using a combination of hardware access control techniques and encryption to provide confidentiality and integrity. The latest example of combining confidentiality, integrity and attestation to protect data in use is Intel Software Guard Extensions (Intel SGX).

All of this protects data on a single computer.

But what if you have multiple systems and data sets with different owners? How can multiple parties collaborate securely on their sensitive data?

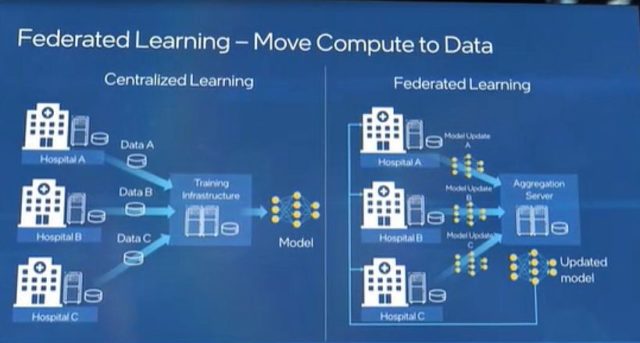

This is where Federated Learning comes in.

What is Federated Learning?

Martin explained: “In many industries such as retail, manufacturing, health care, and financial services, the largest data sets are locked up in what is called data silos. These data silos may exist to address privacy concerns or regulatory challenges, or in some cases, the data is just too large to move. However, these data silos create obstacles, when using machine learning tools to gain valuable insights from the data.”

Take medical imaging, for instance. Machine learning has made advances in identifying key patterns in MRIs, such as the location of brain tumors. However, data privacy concerns make it hard to get multiple entities to collaborate on processing the data, because patient data and medical records are protected by standards like HIPAA.

Intel Labs has been collaborating with the Center for Biomedical Image Computing and Analytics at the University of Pennsylvania Perelman School of Medicine (Penn Medicine) on federated learning.

“In our federated tumor segmentation project, we are co-developing technology to train artificial intelligence models to identify brain tumors,” said Martin.

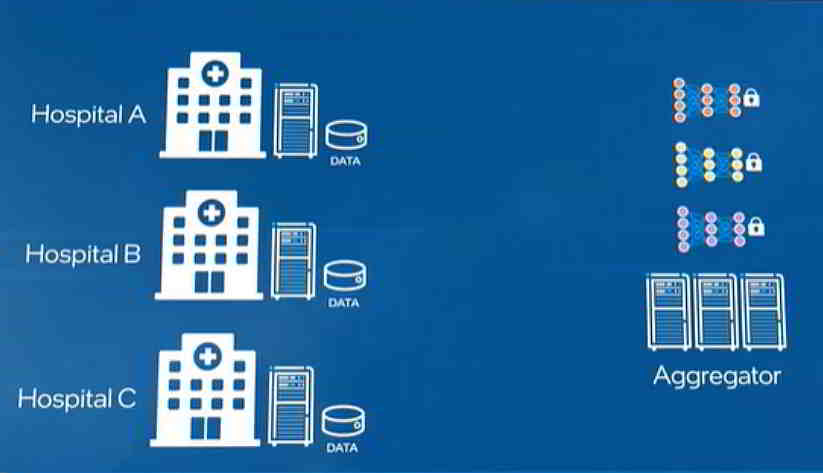

Image Credit: Intel Labs

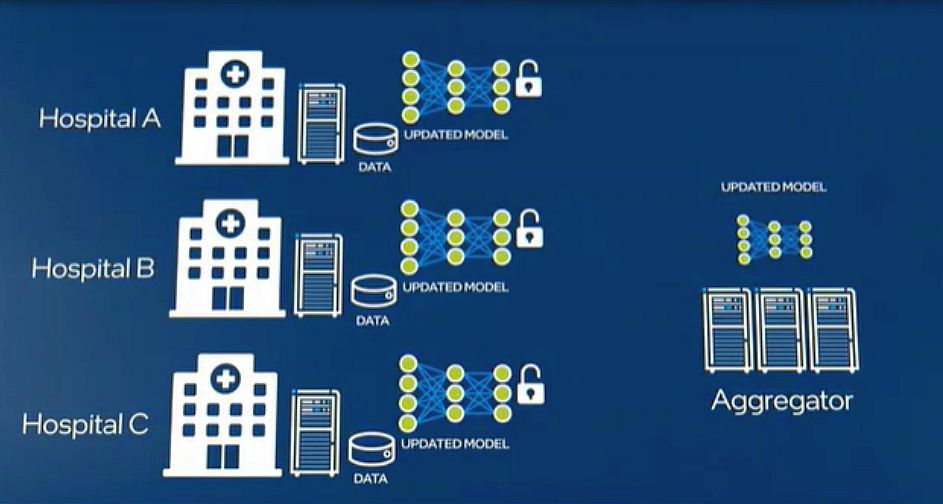

With federated learning, Intel’s scientists can split the computation so that each hospital trains a local version of the algorithm on its own data, on site. The hospitals then send what they learn to a central aggregator, which combines the models from each hospital into a single model without sharing the data.
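The article does not say which aggregation rule the project uses. As an illustration only, the sketch below assumes federated averaging (FedAvg), a widely used rule in which the aggregator averages each site's model weights in proportion to its number of training examples. The toy linear model, the function names (`local_train`, `federated_average`, `federated_round`) and the datasets are all hypothetical; a real tumor-segmentation model would be a deep neural network.

```python
from typing import List, Tuple

# Hypothetical toy model: y = w * x + b, stored as [w, b].
Weights = List[float]
Dataset = List[Tuple[float, float]]


def local_train(global_weights: Weights, data: Dataset,
                lr: float = 0.05, epochs: int = 50) -> Weights:
    """Runs at each hospital: start from the global model, train on local data only."""
    w, b = global_weights
    n = len(data)
    for _ in range(epochs):
        # Gradient of the mean squared error over the local dataset.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Runs at the aggregator: average the models, weighted by each site's sample count."""
    total = sum(count for _, count in updates)
    dim = len(updates[0][0])
    return [sum(weights[i] * count for weights, count in updates) / total
            for i in range(dim)]


def federated_round(global_weights: Weights, hospitals: List[Dataset]) -> Weights:
    # Only model weights and sample counts leave each site; raw data stays local.
    updates = [(local_train(global_weights, data), len(data)) for data in hospitals]
    return federated_average(updates)


if __name__ == "__main__":
    # Hypothetical local datasets, all drawn from y = 2x + 1.
    hospital_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
    hospital_b = [(0.5, 2.0), (1.5, 4.0)]
    hospital_c = [(3.0, 7.0), (-1.0, -1.0), (2.5, 6.0)]
    weights = [0.0, 0.0]
    for _ in range(30):
        weights = federated_round(weights, [hospital_a, hospital_b, hospital_c])
    print([round(v, 2) for v in weights])  # [2.0, 1.0]
```

The aggregator never sees a single data point, yet the combined model recovers the relationship shared across all three sites. Weighting by sample count keeps a small site from pulling the global model as hard as a large one.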

However, this poses another challenge: splitting the computation this way increases the risk that it will be tampered with. To counter this, each hospital uses confidential computing, which protects the confidentiality of the machine learning model. Intel Labs and the hospitals also use integrity and attestation to ensure that the data and model are not manipulated at the hospital level.
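To illustrate how attestation can gate what the aggregator accepts, here is a minimal sketch under heavy simplifying assumptions: an HMAC with a shared key stands in for the hardware-signed reports that a real trusted execution environment such as Intel SGX produces, and `AttestationReport`, `sign_report`, `verify_update`, the key and the expected measurement are hypothetical names, not part of any Intel API.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical: hash ("measurement") of the approved training code the aggregator expects.
TRAINING_CODE = b"approved federated training workload v1"
EXPECTED_MEASUREMENT = hashlib.sha256(TRAINING_CODE).hexdigest()

# Stand-in for a hardware-rooted key. Real TEEs use asymmetric keys and vendor
# certificates, not a shared secret like this.
ATTESTATION_KEY = b"hypothetical-shared-verification-key"


@dataclass
class AttestationReport:
    measurement: str    # hash of the code running inside the enclave
    update_digest: str  # hash of the model update being submitted
    signature: str      # MAC over measurement + update digest


def _mac(measurement: str, digest: str) -> str:
    return hmac.new(ATTESTATION_KEY, (measurement + digest).encode(),
                    hashlib.sha256).hexdigest()


def sign_report(measurement: str, update: bytes) -> AttestationReport:
    """Simulates the report trusted hardware at a hospital would produce."""
    digest = hashlib.sha256(update).hexdigest()
    return AttestationReport(measurement, digest, _mac(measurement, digest))


def verify_update(report: AttestationReport, update: bytes) -> bool:
    """Aggregator side: accept an update only if every check passes."""
    return (
        # The report is authentic (not forged or edited).
        hmac.compare_digest(report.signature, _mac(report.measurement, report.update_digest))
        # The approved training code produced it.
        and report.measurement == EXPECTED_MEASUREMENT
        # The update was not altered after the report was made.
        and report.update_digest == hashlib.sha256(update).hexdigest()
    )


if __name__ == "__main__":
    update = b"serialized model weights"
    good = sign_report(EXPECTED_MEASUREMENT, update)
    print(verify_update(good, update))               # True
    print(verify_update(good, b"tampered weights"))  # False
    rogue = sign_report(hashlib.sha256(b"modified code").hexdigest(), update)
    print(verify_update(rogue, update))              # False
```

The three checks map onto the concerns in the paragraph above: a forged report, modified training code at a hospital, and an update altered after training are each rejected before aggregation.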

Image Credit: Intel Labs

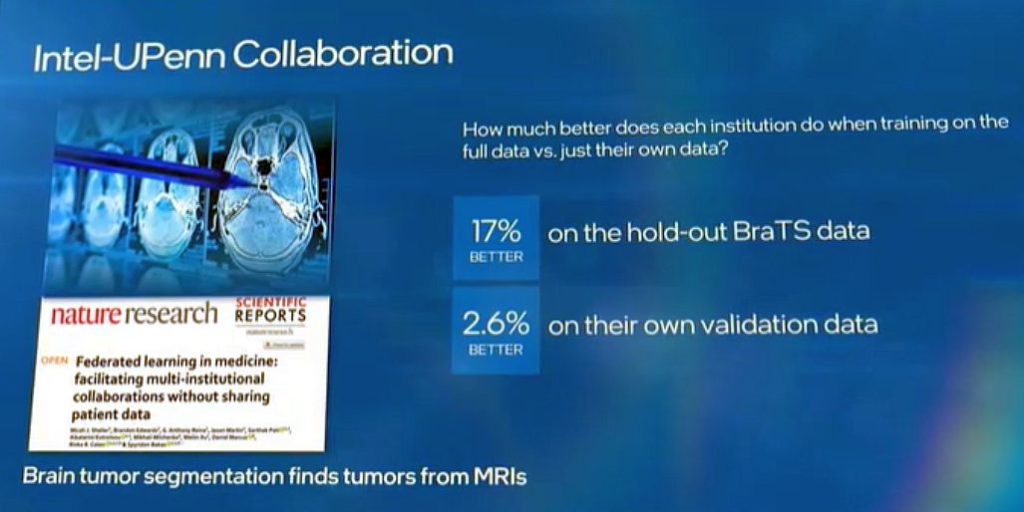

Penn Medicine and Intel Labs published a paper on Federated Learning in the medical imaging domain. The study demonstrated that the federated learning method could train a deep learning model that reaches 99% of the accuracy of the same model trained with the traditional, non-private method.
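The 99% figure is a ratio between two models, not an absolute accuracy score. Writing $Q$ for whatever quality metric is used, and taking a purely hypothetical centralized score of 0.80 for illustration:

$$
\frac{Q_{\text{federated}}}{Q_{\text{centralized}}} \approx 0.99
\quad\Rightarrow\quad
Q_{\text{federated}} \approx 0.99 \times 0.80 = 0.792
$$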

The combined research also showed that institutions did on average 17% better when trained in the federation; measured on their own validation data, the gain was 2.6%.

Image Credit: Intel Labs

Work on this continues and will eventually enable a federation of over 40 international health care and research institutions to collaborate on creating new state-of-the-art AI models, without sensitive patient data leaving the hospitals.

Keep track of Intel Labs’ progress on Federated Learning here: Intel Labs Day 2020 | Intel Newsroom
